Recently, I built some Azure DevOps pipelines for Dataverse. I’m a complete beginner in this area, but I had a vision of how it should work: “a man with a plan”. So I googled quite a bit and found helpful blogs from Tae Rim Han, Benedikt Bergmann and Ryan Spain (all the links are at the bottom of this post). But none of them was exactly what I was looking for.

The requirement for DevOps Dataverse

- Both customizing and PCF

  - The PCF can be deployed and used stand-alone, but it is also part of a more complex solution that also contains tables (entities), forms, views and JS web resources.

- Code-first approach allowing branching

  - I’ve seen quite a few build pipelines that use source control only as a place to track changes to the customization. That is a good idea, but as a developer I don’t want to deploy the components from an environment. Maybe I’ve developed the component using Fiddler AutoResponder and the latest version of the component is not uploaded. Or maybe I’m working on several features in several branches, but I want to make a release with only a few of them. Then I won’t be able to extract the solution from the environment, since I cannot control its content.

  - Maybe this approach doesn’t always fit, but for a solution where the code is the important part and the customization comes second, I want to deploy from the code, not from an environment. I guess this works the same for plugins, not only for PCFs.

- Build all kinds of solutions

  - I need a solution containing only the PCF

  - I need a solution containing both customizing and PCF

  - The above solutions should be generated as managed, unmanaged, release and debug versions.

- Versioning

  - The versions of the above solutions and PCFs should be in sync. In my case, I’ll use the PCF manifest version to keep track of the version, and then generate the solutions using this version. There are other approaches too, like using a file to track the version and applying it to all the solutions and PCFs. That could be useful when more PCFs are involved.

  - I don’t want to define the version as a DevOps environment variable, and I don’t want to read the version from the environment. The versioning should be clear, with each version representing new features added to the product.
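To make the versioning idea concrete, here is a minimal Python sketch of taking the version from the PCF manifest and writing it into a solution manifest. It is not the script used in my pipeline. The function names are hypothetical, and it assumes the usual layout: a `version` attribute on the `<control>` element of `ControlManifest.Input.xml`, and a `<Version>` element under `<SolutionManifest>` in `src/Other/Solution.xml`.

```python
import xml.etree.ElementTree as ET


def read_pcf_version(manifest_xml: str) -> str:
    """Return the version attribute of <control> in a PCF manifest (assumed layout)."""
    root = ET.fromstring(manifest_xml)
    control = root.find("control")
    if control is None or "version" not in control.attrib:
        raise ValueError("no <control version=...> found in the manifest")
    return control.attrib["version"]


def apply_solution_version(solution_xml: str, version: str) -> str:
    """Return the Solution.xml content with <SolutionManifest>/<Version> replaced."""
    root = ET.fromstring(solution_xml)
    node = root.find("SolutionManifest/Version")
    if node is None:
        raise ValueError("no <SolutionManifest>/<Version> found")
    node.text = version
    # Note: ElementTree drops comments and the XML declaration when serializing.
    return ET.tostring(root, encoding="unicode")
```

The PCF manifest is the single source of truth here; the solutions only receive the value. Whether the version string needs to be padded or reformatted for the solution is left open in this sketch.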

Project setup

I have three projects:

Step 1.1 The PCF project (.pcfproj) created using the “Power Apps CLI”

pac pcf init

npm install

npm run build

Step 2.1 A subfolder “Solutions” containing two projects

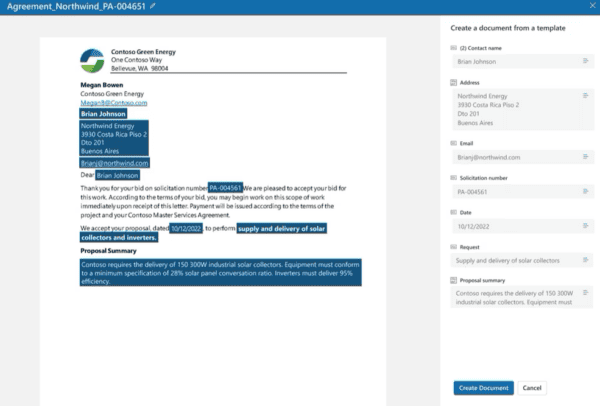

CDS Project (.cdsproj) “Complete”: this one is created using “pac solution clone” and then adding a reference to the PCF project

Solutions>pac solution clone -n Complete

cd Complete

Complete>pac solution add-reference -p ..\..\

Step 2.2 After that, open the generated “.cdsproj”, uncomment the <SolutionPackageType> element and change its value from “Managed” to “Both”. That’s because I want to generate both managed and unmanaged solutions, as described in my other blog.
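Since it is easy to forget this edit in one of the projects, a small check can help. The following Python sketch is only an illustration with a hypothetical function name: it reads the content of a “.cdsproj” file and returns the active <SolutionPackageType> value. It relies on the standard XML parser skipping comments, so an element that is still commented out counts as missing.

```python
import xml.etree.ElementTree as ET


def solution_package_type(cdsproj_xml: str):
    """Return the active <SolutionPackageType> value, or None if it is absent or commented out."""
    root = ET.fromstring(cdsproj_xml)
    for element in root.iter():
        # Project files may declare an XML namespace, so compare the local tag name only.
        if element.tag.rsplit("}", 1)[-1] == "SolutionPackageType":
            return (element.text or "").strip()
    return None
```

For the setup described here, the expected result for both projects would be "Both".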

Step 2.3 Now you can test that the solution can be built: open a command line, navigate to this folder, and call

msbuild /t:restore

msbuild

msbuild /p:configuration=Release

Step 3.1 CDS Project (.cdsproj) “PCFOnly”: this one is created using “pac solution init” and then adding a reference to the PCF project

Solutions\PCFOnly>pac solution init -pn <PUBLISHER NAME> -pp <PREFIX>

pac solution add-reference -p ..\..\

Step 3.2 Repeat step 2.2 for this project too: configure the “.cdsproj” to generate both managed and unmanaged solutions.

Step 3.3 Repeat step 2.3 in the folder “PCFOnly” and check if it builds.

Even if CDS was renamed to Dataverse, the CDS Project type (.cdsproj), created using Power Apps CLI, is still named CDS Project.

The Power Apps CLI (pac) works a bit differently for “solution clone” compared to “solution init”.

For “clone” you need to be in the parent folder. It will create a subfolder for the solution.

For “init” you should create the folder beforehand and move into it. Alternatively, you can use the new “-o” parameter to specify where the project should be placed.

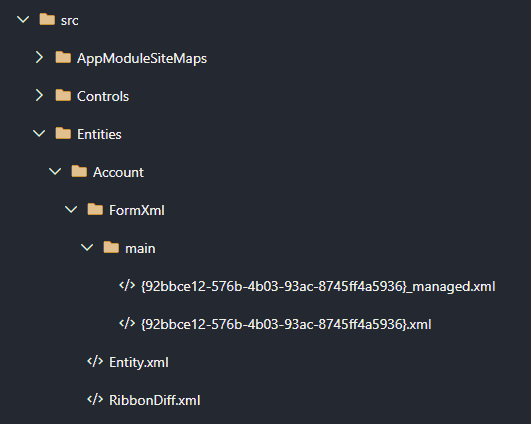

Both “pac solution” commands produce a “.cdsproj” file and a “src/Other” folder where the solution elements are placed. With “pac solution clone”, we also get a lot of other subfolders in “src”, such as:

- AppModuleSiteMap

- Controls

- Entities

- OptionSets

- WebResources

…

Implementation

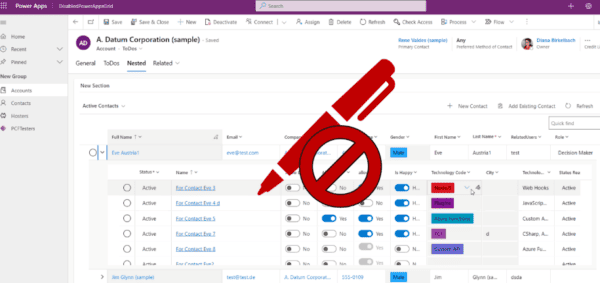

I made two build pipelines. The first one refreshes the cloned CDS solution when needed. The second is a pure build pipeline, based only on the pcfproj and cdsproj. The second one doesn’t need any environment in order to build and create the solution. This is ALM for developers.

Pipeline 1: refresh the solution clone

The “Complete” cdsproj is created using “pac solution clone”, which lets me clone a solution from the environment. But this cannot be executed twice: if I run “solution clone” again, I get an error because the folder already exists. The customizing for my solution might change, though, and I want to be able to refresh the content. With my first pipeline, I allow the technical architect/consultant of the product to make changes and commit them into my CDS project. It’s pretty much what most Dataverse pipelines do, with a small difference: the content is checked in inside my “.cdsproj”.

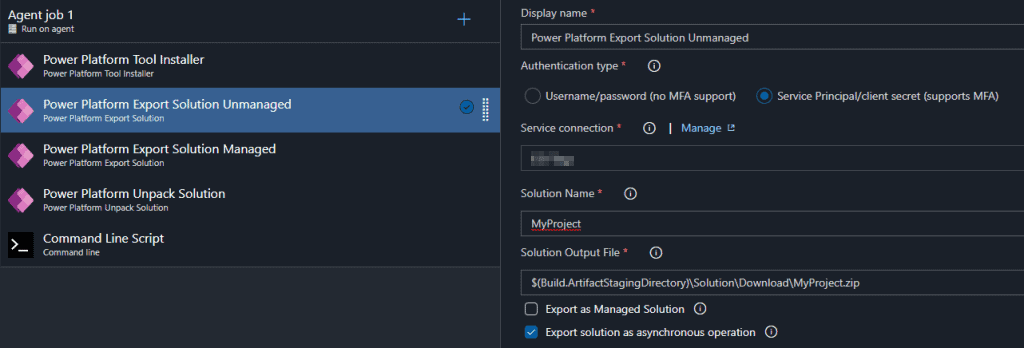

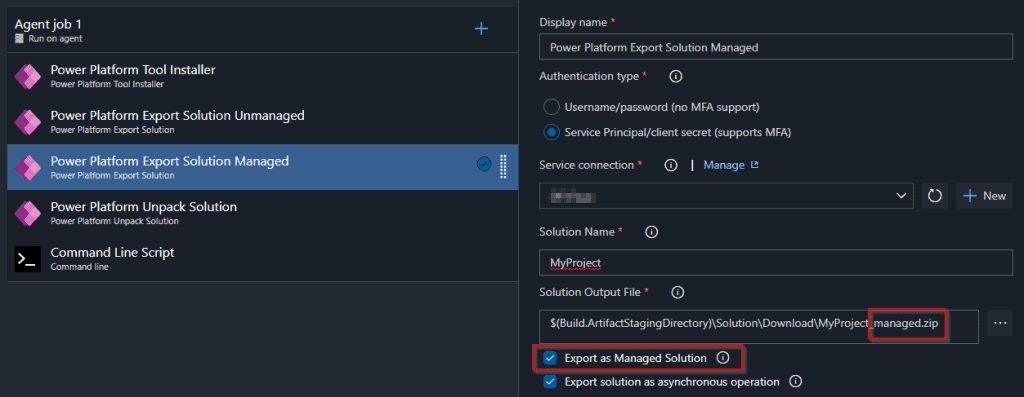

I use the Microsoft Power Platform Build Tools, as described in the docs.

- I want both managed and unmanaged solutions

- I unpack the content of the solution inside my (.cdsproj)/src
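My pipeline uses the Build Tools tasks for this, but the same two steps can be sketched with the Power Apps CLI for anyone who wants to try them locally. The Python helper below only builds the command lines and does not run them. The function name and the folder arguments are hypothetical, and the flag names (`--name`, `--path`, `--managed`, `--zipfile`, `--folder`, `--packagetype`) are my understanding of the current `pac solution export` and `pac solution unpack` commands, so check them against `pac solution help` first. It also assumes that unpacking with package type “Both” expects the managed zip next to the unmanaged one, with a `_managed` suffix.

```python
def refresh_commands(solution_name: str, work_dir: str, src_dir: str) -> list:
    """Build (but do not run) the commands that refresh the unpacked solution in src_dir."""
    unmanaged_zip = f"{work_dir}/{solution_name}.zip"
    # Assumption: the managed zip sits beside the unmanaged one with a "_managed" suffix.
    managed_zip = f"{work_dir}/{solution_name}_managed.zip"
    return [
        # Export the unmanaged solution from the environment.
        ["pac", "solution", "export", "--name", solution_name, "--path", unmanaged_zip],
        # Export the managed solution as well.
        ["pac", "solution", "export", "--name", solution_name, "--path", managed_zip, "--managed"],
        # Unpack both into the src folder of the CDS project.
        ["pac", "solution", "unpack", "--zipfile", unmanaged_zip,
         "--folder", src_dir, "--packagetype", "Both"],
    ]
```

Each inner list could be passed to `subprocess.run(command, check=True)` after authenticating with `pac auth create`. Unlike “pac solution clone”, this can be repeated, because it unpacks into a folder that already exists.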

Let’s have a look at the files generated by “pac solution clone” inside the “src” folder:

As you see, for each resource, there is a managed and an unmanaged version. I’ll need that too. And that’s how I do it:

Don’t forget to allow scripts to access the OAuth token, so that the pipeline can commit to git.

Now the customizing is committed to my cdsproj in the repository.

In the next blog I’ll describe the second pipeline, which increments the version, builds and creates the artifacts.

References

Blogs:

- Using Azure DevOps to Build and Deploy Multiple PCF Controls by Tae Rim Han

- CDS – Basic ALM process by Benedikt Bergmann

- Build and Deploy a PCF Control Using Azure DevOps by Ryan Spain

YouTube:

- Application Lifecycle Management (ALM) on the Power Platform | BOD133 by Casey Burke, Shan McArthur and Per Mikkelsen. Funny: I found this when I was done with my pipelines, and some small points are still missing there, but it confirmed that my vision was not that bad. A really great deep dive.

- Maple Power 2020 Power Platform ALM using Azure DevOps by Chris Piasecki

Docs:

About the Author:

Hi! My name is Diana Birkelbach and I’m a developer.

I’m a lead developer at ORBIS AG, and together with the team we develop generic components and accelerators for Dynamics 365.

I started with web development with ASP (VB) in the old days, before .NET was there. For better performance I used a lot of JavaScript and TSQL. Later on I moved to ASP.NET and C#.

Reference:

Birkelbach, D. (2020). DevOps Dataverse ALM using Power Apps CLI: a source centric approach. Available at: https://dianabirkelbach.wordpress.com/2020/12/06/devops-dataverse-alm-using-power-apps-cli-a-source-centric-approach/ [Accessed: 7th January 2021].